What Makes GPTHumanizer AI Trustworthy? Privacy, Reviewability, and Explainability in Real Editing Systems

Summary

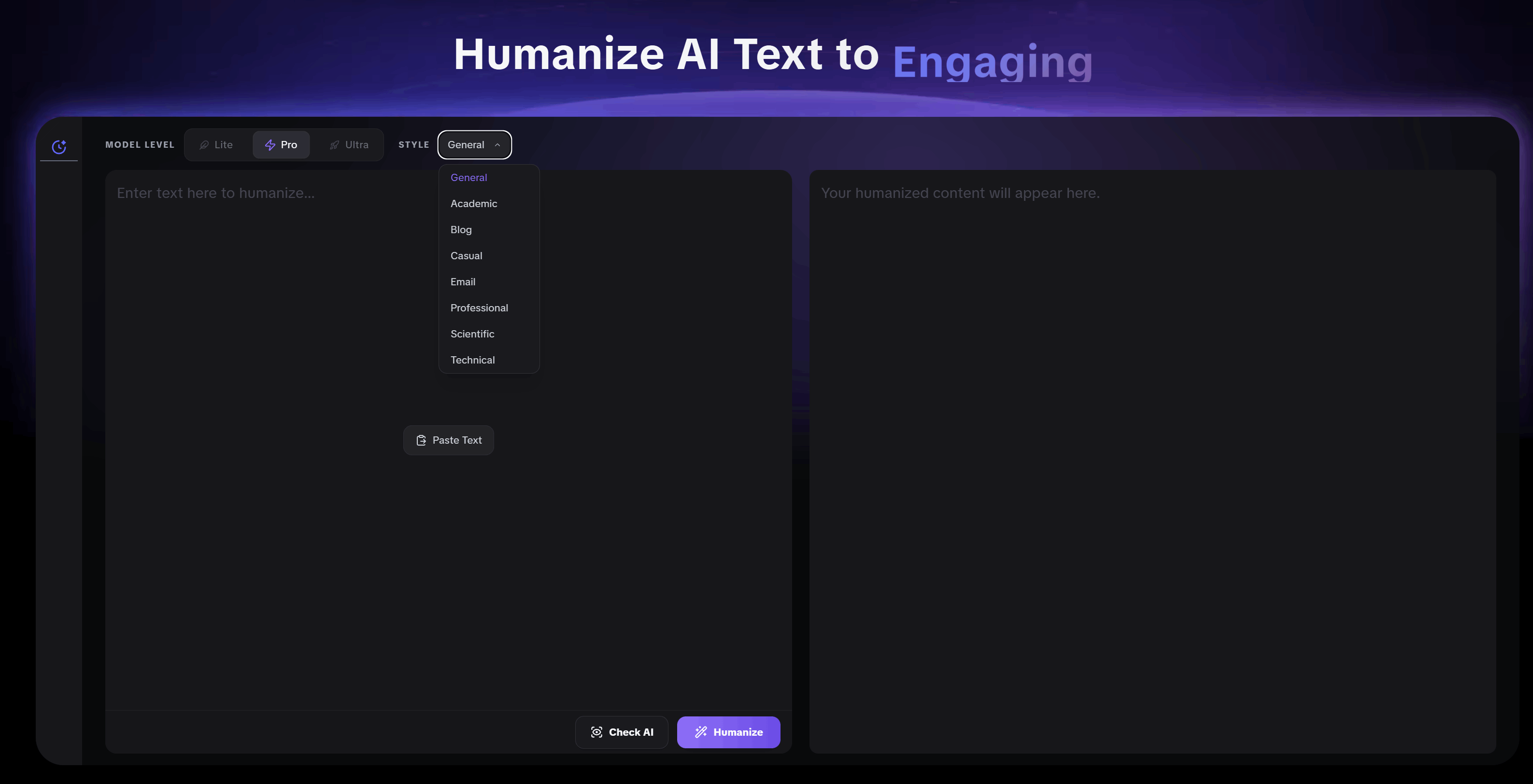

From a workflow perspective, GPTHumanizer AI works best as a content polisher rather than a one-click replacement tool for every kind of text. Lite is better for short, simple content that only needs light humanization. Pro is the most stable option for most real drafts because it improves flow and phrasing without pushing the rewrite too far. Ultra is useful when the goal is to keep the meaning but clean up expression and sentence order more aggressively, though it can go further than desired on fact-heavy or tightly controlled sections.

The article also points out that trust depends on how easy the output is to review. Many rewriters create hidden problems by inserting awkward spacing, spelling issues, or unnatural punctuation in ways that are easy to miss, especially for non-native English users. GPTHumanizer AI stands out because its stronger modes are still framed around readability, phrasing, meaning retention, and user review rather than surface-level tricks.

Built-in feedback is another part of that trust model. Its biggest value is not cosmetic. It helps users quickly see which sentences may still need improvement, making it easier to decide whether to edit manually or run another pass. Even so, the article makes a clear recommendation: manual review is still necessary in all cases.

Overall, the core argument is simple. A trustworthy AI humanizer is not the one that changes the most. It is the one that gives users stable output, controllable rewrite depth, and a clearer review process. By that standard, GPTHumanizer AI makes a stronger case than a generic rewriter.

If I only looked at whether an AI humanizer made a paragraph sound smoother, I would miss the part that actually matters. A polished output is easy to show. Trust is harder to earn.

That is why I do not think this topic should be reduced to “does the rewrite sound more human?” Once you stop testing throwaway examples and start working with real drafts, the standard changes. You begin to care about whether the tool is stable, whether the output is easy to review, and whether the system behaves in a way you can actually understand and control.

That is also why GPTHumanizer AI is more interesting to me than a generic rewriter. If you have already read the technical evolution of AI humanizers, this is really the practical follow-up. The real question is not just whether a tool rewrites. It is whether it is trustworthy when the content actually matters.

A trustworthy AI humanizer is not the one that changes the most

I think this is where a lot of people still misjudge these tools.

A nice rewrite can look good at first glance. It feels different, the sentences are different, even the rhythm is different. The output looks less obviously computer-generated. That is not the same as the tool being trustworthy. In practice, the tools that push the hardest are the ones that create the most extra reviewing work.

That is why I would not define a trustworthy AI humanizer as the one that pushes the hardest. I would define it as the one that offers you the most controllable output.

That is one of the more useful ways to understand GPTHumanizer AI. The real value of Lite, Pro, and Ultra isn’t just that there are three model names on the page, but that they represent three different levels of editorial pressure, and that matters far more than people think.

Lite is the mode I would use when I have short content with simple wording and want only a basic humanization pass. I don’t use it when I want something more advanced or polished. It’s better suited to a light cleanup than to careful editorial work.

Pro is the most stable overall. It is the one I would trust most of the time. It doesn’t feel as lightweight as Lite, and it doesn’t push as hard as Ultra. In real use, that middle ground is exactly why it works so well. If I want the output to feel improved without making the review stage less reliable, Pro is the safest choice.

Ultra pushes the rewrite harder and is more useful when I want to keep the meaning but clean up the expression and the sentence order more thoroughly. That can be quite useful, but it is also where I become more careful. If there is something I want to preserve more closely, I would choose Pro first. Ultra is useful, but it is also the mode most likely to change more than I wanted.

That is the first sign of trust for me. A serious AI humanizer should not approach every paragraph assuming the same amount of rewriting is needed. That isn’t how real content works, and a tool becomes far more trustworthy when it respects that.

Privacy matters because real drafts are not disposable

I believe that privacy is talked about in ways that are too vague.

People say it matters, and it does, but the more important question is why it matters in the context of a writing workflow. The answer is simple: when the draft becomes real, privacy is no longer a background problem. It becomes part of whether a tool feels safe to use at all.

An intro to a blog, a client-facing paragraph, a product explanation, a draft with internal context, or a section built from prompt drafting all have different sensitivities. So when I think about trust, I don't think about it separately from the output and the workflow surrounding that output.

My answer here is simple: users should not paste in whole prompt-style chunks and expect everything to be handled correctly just because the system is meant to distinguish those patterns. GPTHumanizer AI does seem to do a better job of detecting those cases than a generic product would, but there are edge cases, and that's why I wouldn't treat it like a magic box.

I also wouldn't give the humanizer short bullet fragments of a few words each and expect it to always produce stable output. When the input is too short and skeletal, the output can be less stable. That doesn't mean the product is weak. It means the product works best when used for the job it is designed for.

That is an important distinction in this space. I believe GPTHumanizer AI is best thought of as a content polisher, not a shortcut for every possible shape of text. Once you think about it that way, the trust story looks more realistic. The product is designed to polish usable drafts, not to rescue extreme inputs with equal success every time.

Reviewability is where GPTHumanizer AI actually proves itself

If I were to choose the most critical part of this article, it would be this one.

Many humanizers disappoint, not because they don't transform the text, but because they make the final review step more stressful than it should be. You get a more dramatic rewrite, but then you have to spend additional time checking whether the meaning has shifted, whether the tone still works, whether a detail has been dumbed down, or whether a paragraph now sounds odd in a way that is easy to miss.

I run into this a lot with other rewriters out there. Some of them seem to be playing the detector game by introducing deliberate little errors or odd artifacts in the rewrite. It might be an extra space, a misspelling, or an odd punctuation choice. They’re easy to miss, and even easier to miss for non-native English speakers. That’s one of the quickest ways for a tool to stop feeling trustworthy.
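For readers who want to check for this kind of artifact themselves, here is a minimal sketch of a reviewer-side scan. It is not part of GPTHumanizer AI or any other product. The pattern set and the `find_artifacts` name are my own assumptions, and the list is illustrative rather than exhaustive. It does not catch misspellings, which would need a dictionary.

```python
import re

# Hypothetical checks: labels and patterns are illustrative assumptions,
# not a complete list of what a rewriter might insert.
ARTIFACT_PATTERNS = {
    "double space": re.compile(r"(?<=\S) {2,}(?=\S)"),
    "space before punctuation": re.compile(r"\s+[,.;:!?]"),
    "invisible character": re.compile(r"[\u200b\u200c\u200d\u2060\ufeff]"),
    "repeated punctuation": re.compile(r"([,;:])\1+"),
}


def find_artifacts(text):
    """Return (label, offset, snippet) tuples for each suspicious spot."""
    hits = []
    for label, pattern in ARTIFACT_PATTERNS.items():
        for match in pattern.finditer(text):
            # Keep a little context around the match for manual review.
            start = max(match.start() - 10, 0)
            snippet = text[start:match.end() + 10]
            hits.append((label, match.start(), snippet))
    # Sort by position so the report reads in document order.
    return sorted(hits, key=lambda hit: hit[1])


sample = "The draft reads well ,  but it hides\u200b a few issues."
for label, offset, snippet in find_artifacts(sample):
    print(f"{offset:>3}  {label}: {snippet!r}")
```

A scan like this only narrows down where to look. It does not replace reading the output.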

This is where Pro shines. For the most part, it gives me the most consistent output of the three modes. It doesn’t feel like the output is too light, and it doesn’t overpush the rewrite. That means it’s easier to sign off.

I still do my own review, though. I wouldn’t say trust means blind acceptance. I would say trust means the tool gives me a result with fewer reasons to worry and a more solid base to work from. With GPTHumanizer AI that’s usually what happens when I use Pro. I still read the output and flag sections I think could use more work, but I’m not spending time hunting for weird synthetic errors that shouldn’t have been there in the first place.

That’s a much bigger advantage than it seems. A trustworthy AI humanizer should reduce friction, not relocate it to hidden corners.

Explainability in real editing systems is about knowing what to re-check

I don’t think that explainability in writing tools should be overcomplicated.

Most users don’t need a lecture on the model internals. What they need is a clearer understanding of what changed, why it changed, and where they still need to look before publishing.

That is the version of explainability that matters in this scenario.

When I use GPTHumanizer AI, it works best when it helps me understand what it is editing at the writing level. Did it smooth over rigid phrasing? Did it reduce repetition? Did it make the sentence flow more naturally? Did it reorder the ideas more cleanly without changing the argument? Those are the kinds of questions you want to be able to answer during the review step.
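One simple way to answer those questions outside any tool is to compare the draft and the rewrite sentence by sentence. The sketch below uses Python's standard `difflib` module. It is my own illustration, not how GPTHumanizer AI's feedback works, and the `split_sentences` and `changed_sentences` helpers are hypothetical names. The sentence splitter is deliberately naive and will mis-split abbreviations.

```python
import difflib
import re


def split_sentences(text):
    """Naive splitter: breaks after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def changed_sentences(original, rewritten):
    """Return (tag, before, after) for every non-identical sentence span."""
    before = split_sentences(original)
    after = split_sentences(rewritten)
    matcher = difflib.SequenceMatcher(a=before, b=after, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        # Tags are "equal", "replace", "insert", or "delete".
        if tag != "equal":
            changes.append((tag, before[i1:i2], after[j1:j2]))
    return changes


original = "The report is due Friday. It covers Q3 revenue. Please review it."
rewritten = "The report is due Friday. It summarizes Q3 revenue. Please review it."
for tag, old, new in changed_sentences(original, rewritten):
    print(tag, old, "->", new)
```

The untouched sentences drop out of the report, so the review effort goes to the sentences that actually moved.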

That is when the built-in feedback actually starts to be useful, too. The biggest advantage is not that it produces another flashy control layer for the user in the interface. The real advantage is that it makes it easier to see which sentences might still need more work. That puts the user in a better position to determine if they want to edit manually or run a second pass.

That is quite important in practice. A tool becomes more trusted when it does not simply spit out output and make the user start guessing. If it helps the user see where the text still feels wrong, the workflow becomes more transparent and more controllable.

That is one of the reasons this tool doesn’t feel like a template rewriter but rather an editing system. Explainability, at least in this case, is not about showing off the model. It is about helping the user understand the output well enough to make a good editorial decision.

Why GPTHumanizer AI feels more credible in practice

What feels stronger about GPTHumanizer AI is not one feature or one tagline. It is the fact that the product logic still makes sense when you consider how people really rewrite with an AI humanizer.

Many of the tools out there still share the classic weakness. They make the rewrite dramatic, claim it is more human, and stop there. That works on the landing page, but it’s much less persuasive when you’re editing real content. If the rewrite is too aggressive, you’re less able to review it. If the product says nothing about how to evaluate the outcome, you’re on your own. If the brand promise is broader than the workflow can realistically deliver, trust goes out the window.

GPTHumanizer AI works better because it feels more geared to the reality of editing. The rewrite-depth setup supports reviewability, because not every paragraph needs the same level of rewriting. The framing around flow, readability, phrasing, and meaning retention makes each edit easier to assess, which makes the tool feel more explainable in use. And the overall tone of the product is less generic than many rewriters because it treats humanization as a matter of controlled progress instead of a one-click magic fix.

That is the real point to me. GPTHumanizer AI feels stronger because you can see how it delivers on its claims, not because it simply makes them. Once a tool starts to feel consistent at that level, it starts to feel less like a template-brand rewriter and more like something you can actually use on work that matters.

Conclusion

I would never trust an AI humanizer simply because it could make one paragraph sound less mechanical. That is a very low bar.

The tools that are worth using are those that make real editing controllable. They're the ones that provide a mode you can rely on when you need it. The ones that make review more useful instead of hiding problems in the output. The ones that let you understand what's still rough. And the ones that act like tools designed for editing real drafts, not demo text.

That's why I think there is a real case for GPTHumanizer AI.

Not because a bigger rewrite is better, and not because it removes the need for review, but because the tool works well when approached as a content polisher with controllable rewrite depth, a review logic that makes sense, and a realistic approach to trust in real editing systems.

FAQ

Which GPTHumanizer AI mode should most people use?

For most real writing tasks, Pro is usually the safest choice. It is the most stable option overall and gives a better balance between natural rewriting and meaning control. Lite is better for short, simple content that only needs light cleanup, while Ultra is better for stronger rewriting when the draft feels too flat or mechanical.

Do I still need to manually review the output of GPTHumanizer AI?

Yes. Manual review is still necessary in every case. Even when the output is stable, it is still important to check sections that contain precise claims, sensitive phrasing, or parts that may need additional refinement before publishing.

What kind of input does GPTHumanizer AI handle less well?

It works less reliably on extremely short bullet-style inputs that only contain a few words per line. It is also not a good idea to paste in raw prompt-style text and expect perfect results every time. GPTHumanizer AI is strongest when it is used to polish a usable draft, not when it is forced to reconstruct meaning from very thin input.

What makes GPTHumanizer AI feel more trustworthy than many other rewriters?

The biggest difference is that it feels more controllable. Instead of forcing every draft through the same rewrite pressure, it gives users different levels of editing depth. It also avoids the kind of artificial errors that some tools introduce, such as awkward spacing, spelling issues, or strange punctuation that can be easy to miss during review.

What is the real benefit of GPTHumanizer AI's built-in feedback?

Its biggest benefit is that it helps users spot which sentences may still need work. That makes it easier to decide whether to adjust the wording manually or run another humanization pass. It supports the review process instead of leaving users to guess what still feels off.

Is GPTHumanizer AI best used as a full rewriter or as a content polisher?

It is best understood as a content polisher. It works well when the draft already has usable meaning and structure, and the goal is to improve flow, phrasing, readability, and naturalness without losing control of the final result.

Is privacy only a legal concern, or is it part of the product experience?

It is part of the product experience. Once users are working with real drafts instead of throwaway examples, privacy becomes part of whether the tool feels safe to use at all. That is why responsible input habits still matter, especially when the content includes sensitive or high-stakes material.
