GPTinf AI Detector Review: Is Its Checker Actually Reliable?

Summary

GPTinf AI Detector is easy to access and performed reasonably on clear samples, but cross-detector testing showed why its score should not be treated as final proof. It was free to use, required no login in testing, and handled a 2,000+ word sample normally. However, GPTinf-humanized output received very different results across GPTinf, GPTHumanizer AI, and GPTZero.

- GPTinf AI Detector was free and required no login in testing.

The detector could be used directly without creating an account, and a 2,000+ word sample was processed normally.

- GPTinf AI Detector worked well on obvious AI text.

A generic remote work paragraph was rated 100% AI, which matched its broad, polished, and template-like structure.

- GPTinf AI Detector did not over-penalize rough human writing in this test.

A messy human-written draft received a 16% AI score, suggesting the checker did not simply treat imperfect grammar as AI writing.

- GPTinf-humanized output scored very low in GPTinf Detector.

The same output received a 3% AI score inside GPTinf’s own detector, which made the result look strongly human-like within its own workflow.

- External detectors gave different results.

GPTHumanizer AI Detector rated the GPTinf-humanized output 49% AI, while GPTZero flagged it as 100% possible AI paraphrasing.

- Same-tool detector results need caution.

A humanizer output may perform better in the same product’s detector than it does in external detection tools.

- Detector scores are useful but limited.

A score can show whether text looks AI-like, but it cannot fully judge meaning, accuracy, originality, or writing quality.

- GPTinf AI Detector is best used as a first check.

It can help identify text that needs review, but important writing should still be checked manually and, when necessary, compared with another detector.

You paste a paragraph into GPTinf AI Detector, get a percentage score, and then the real question starts: should you actually trust that result? A detector score can look precise, but anyone who has tested AI checkers knows one result rarely tells the whole story.

In my test, GPTinf AI Detector was easy to access. It was free to use, required no login, and successfully processed a text sample of more than 2,000 words. That makes it convenient for quick checking, especially compared with tools that block longer detection behind registration or paid access.

The test results were useful, but they also showed why I would not rely on GPTinf Detector alone. GPTinf labeled a generic AI-written remote work paragraph as 100% AI and gave a rough human-written draft only 16% AI. But when I tested GPTinf-humanized text, GPTinf’s own detector rated it 3% AI, while GPTHumanizer AI Detector rated the same output 49% AI, and GPTZero flagged it as 100% possible AI paraphrasing.

That difference is the main point of this review. GPTinf AI Detector is useful as a first signal, but it should not be treated as final proof, especially when checking text produced by GPTinf’s own humanizer.

For the broader product experience, including GPTinf’s humanizer, pricing, free limits, and output quality, read the full GPTinf review. This article focuses only on the detector.

Quick Verdict: GPTinf AI Detector Is Convenient, But One Score Is Not Enough

GPTinf AI Detector was free to use in my test, required no login, and handled long text samples. It is useful for quick screening, but its score should not be treated as final proof of authorship.

The access experience was strong. I could open the detector, paste a 2,000+ word sample, and get a result without signing in. For users who only want to check one draft quickly, that is a real advantage.

But convenience and reliability are different questions. GPTinf gave sensible results on clear samples, yet external detector checks showed a very different reading of GPTinf-humanized output. A low AI score can be encouraging, but it does not automatically prove that a text is human-written, natural, accurate, or ready to publish.

Is GPTinf AI Detector Free?

Yes, GPTinf AI Detector was free to use in my test. It did not require login, and it successfully processed a text sample of more than 2,000 words without blocking the result.

This is one of the stronger parts of GPTinf’s detector experience. Some AI checkers make users create an account before showing a result, or they limit longer inputs unless users upgrade. GPTinf Detector was more convenient in my test: I could paste a long sample and get a result directly.

That matters because detector use is often occasional. A user may only want to check one article section, one rewritten paragraph, one work draft, or one piece of AI-assisted writing. For that kind of quick check, GPTinf AI Detector feels easy to start with.

Still, free access does not mean the score should be treated as final evidence. The detector can help you spot possible AI-like patterns, but the result still needs interpretation.

How I Tested GPTinf AI Detector

I tested GPTinf AI Detector with practical samples: obvious AI writing, rough human writing, GPTinf-humanized output, and a longer 2,000+ word input to check access limits.

This was not a scientific benchmark. A real benchmark would require hundreds of samples, controlled categories, repeated tests, and multiple detection tools. My goal was more practical: I wanted to see whether GPTinf Detector behaves in a way that matches what normal users expect.

Here is the test summary:

Test | Text type | GPTinf Detector result | Extra detector result | My take |

Access test | 2,000+ word input | Tool worked normally | No login required | Good free access experience |

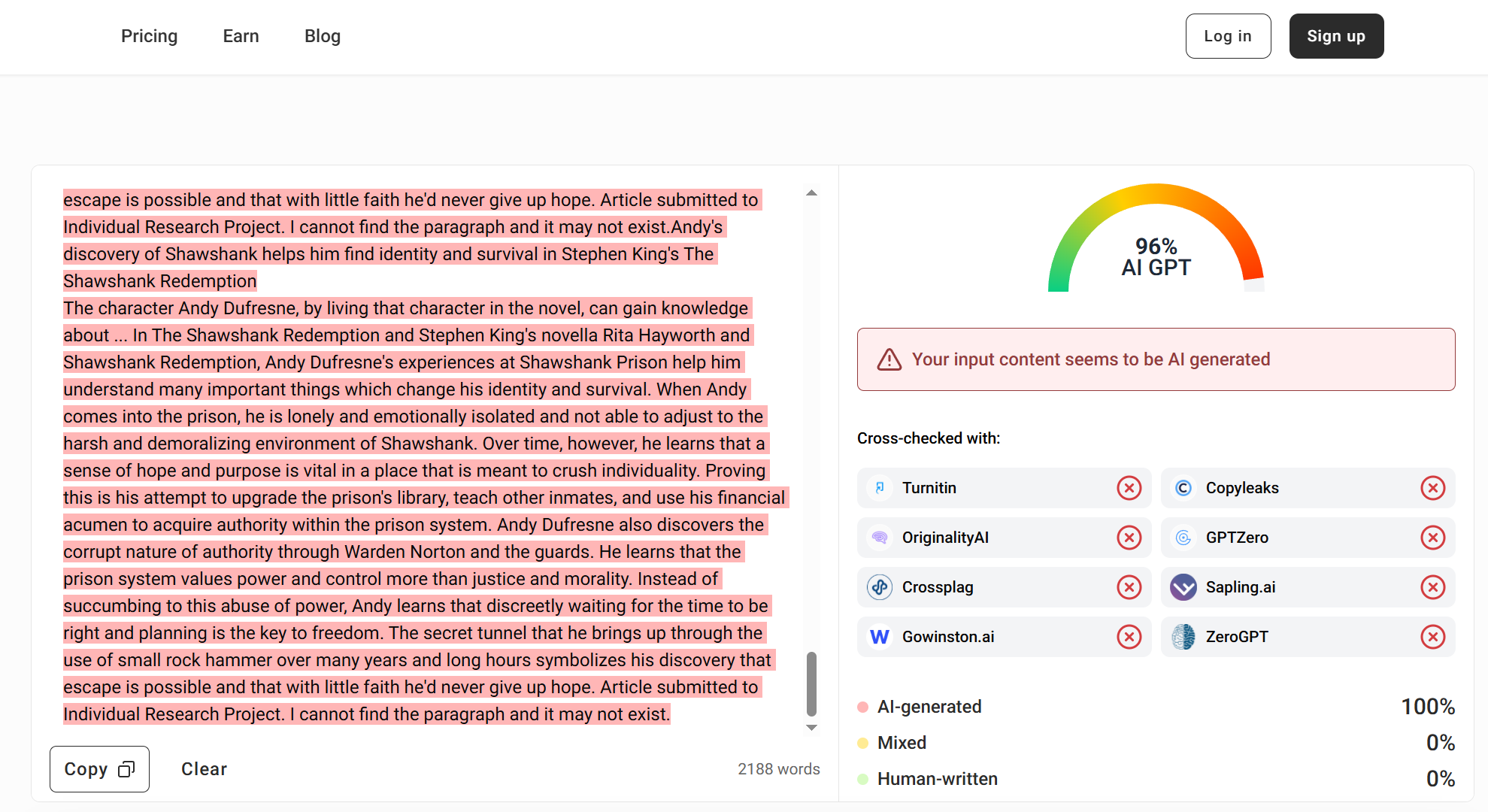

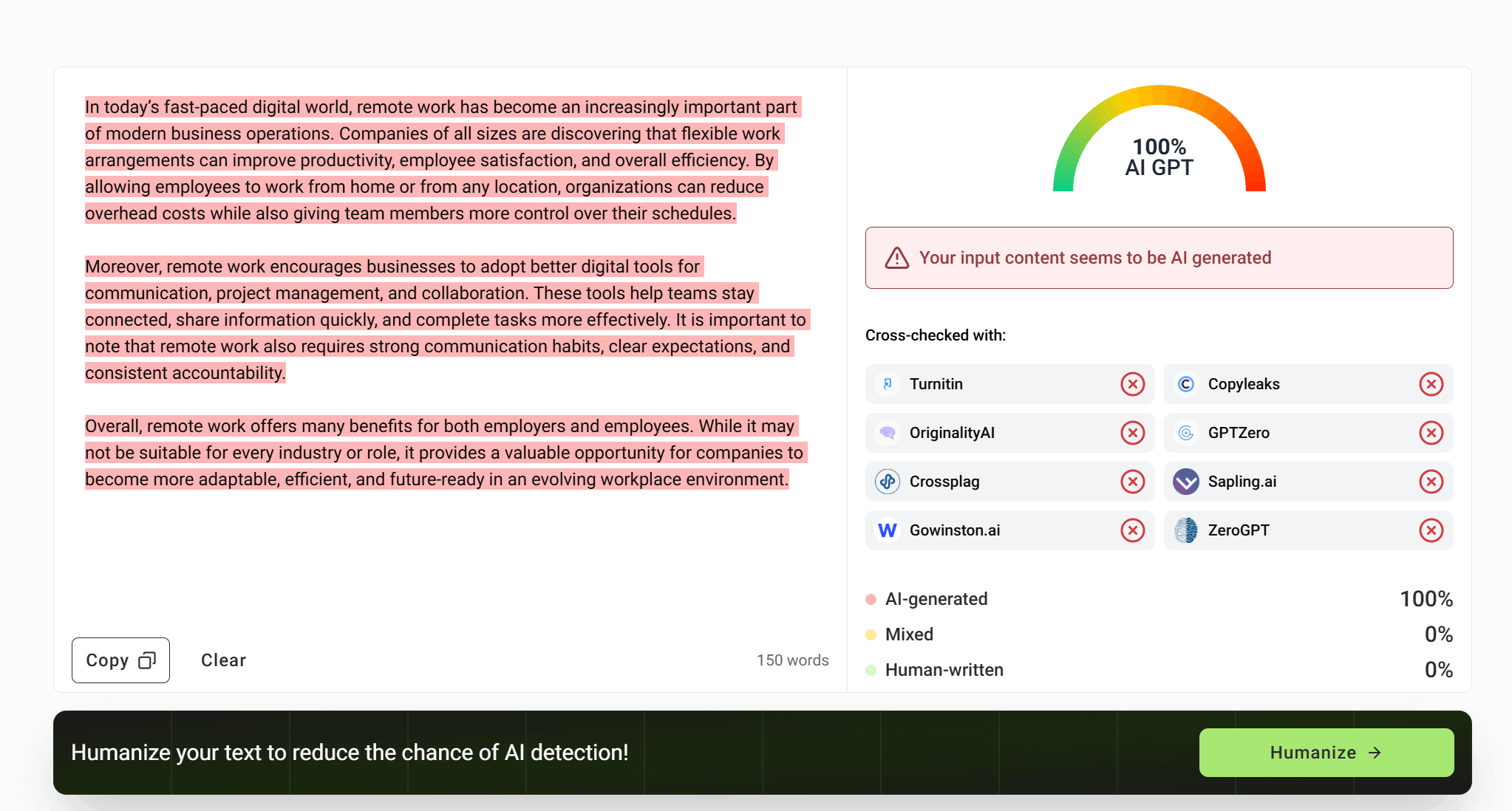

Test 1 | Obvious AI-style remote work paragraph | 100% AI | Not tested | Reasonable result |

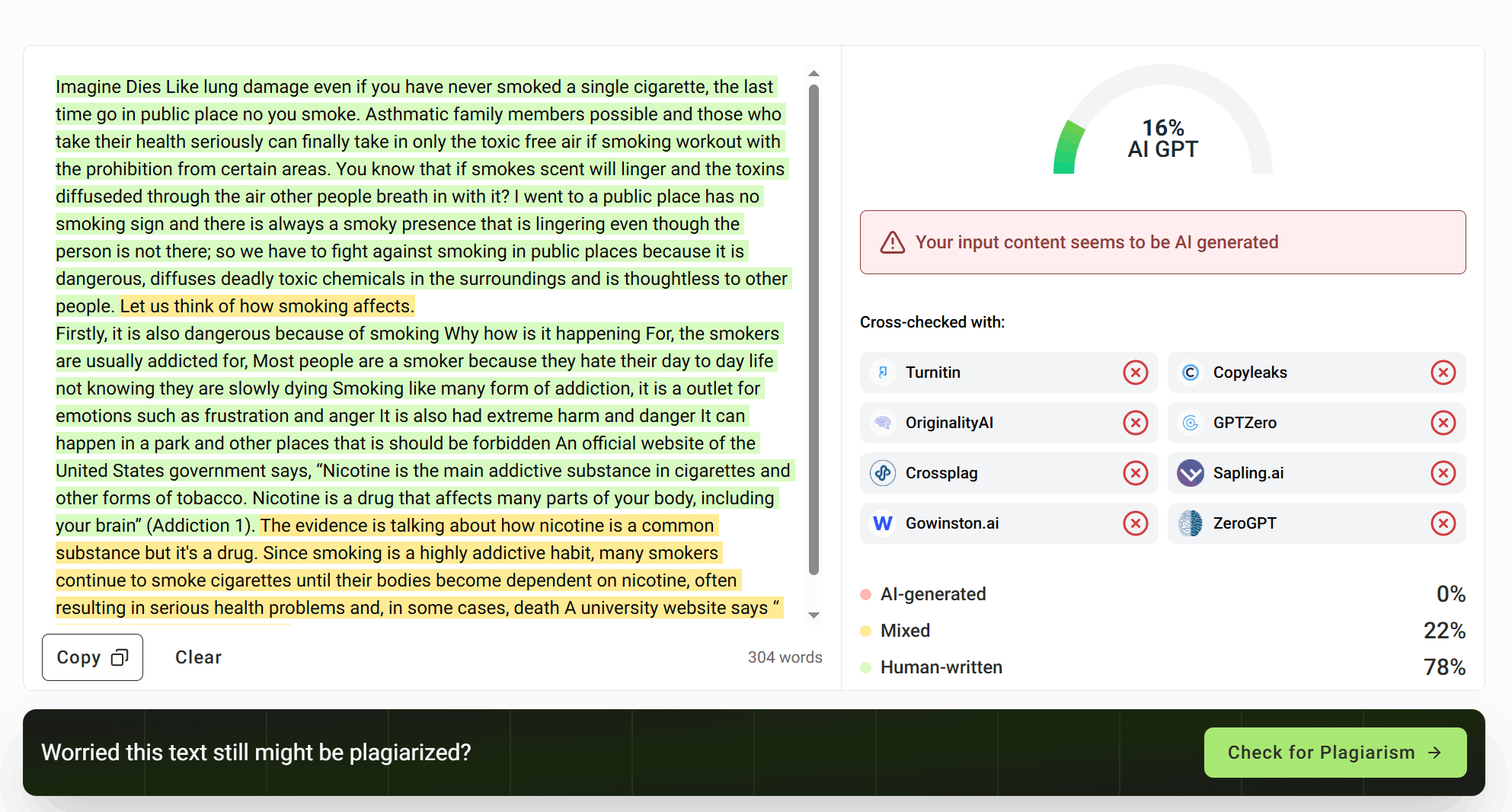

Test 2 | Rough human-written smoking/public place draft | 16% AI | Not tested | Reasonable result |

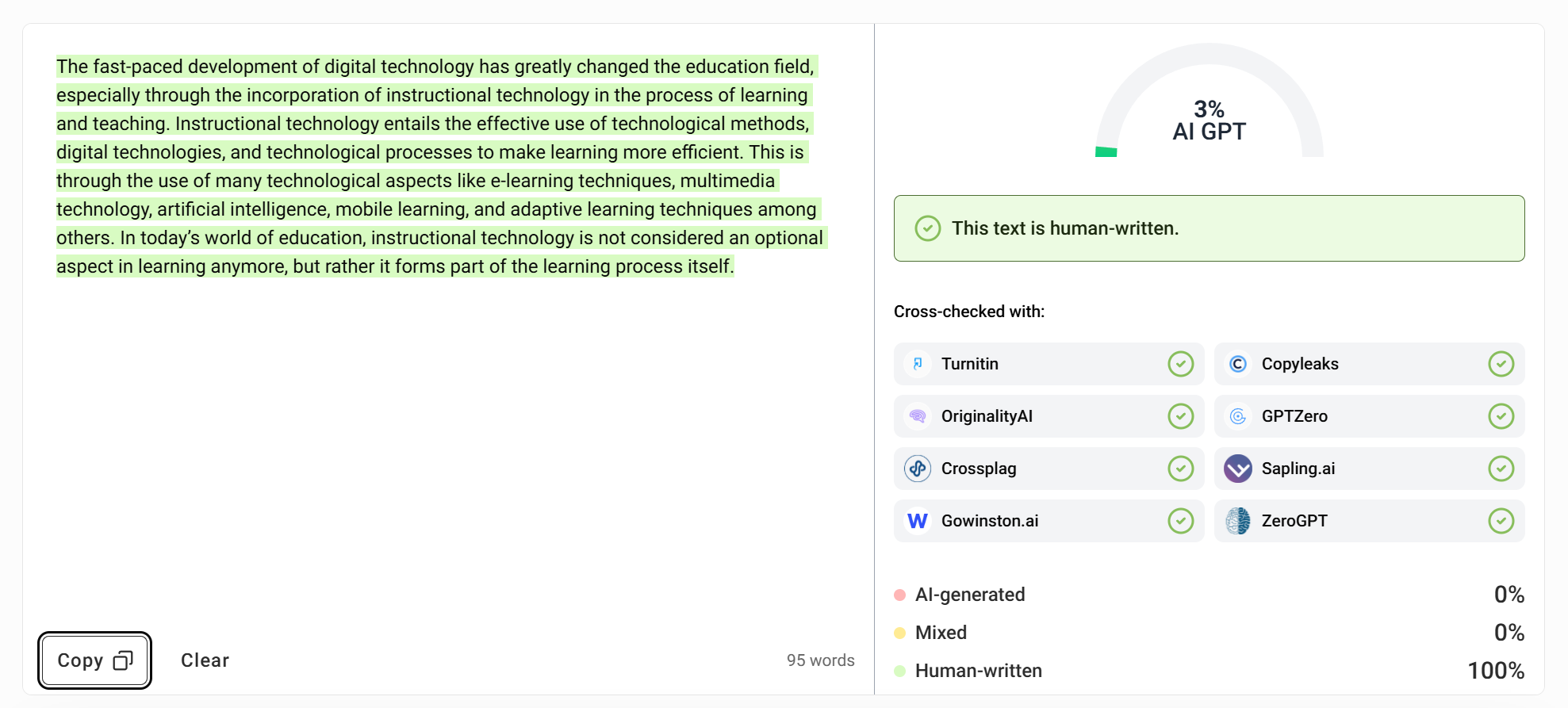

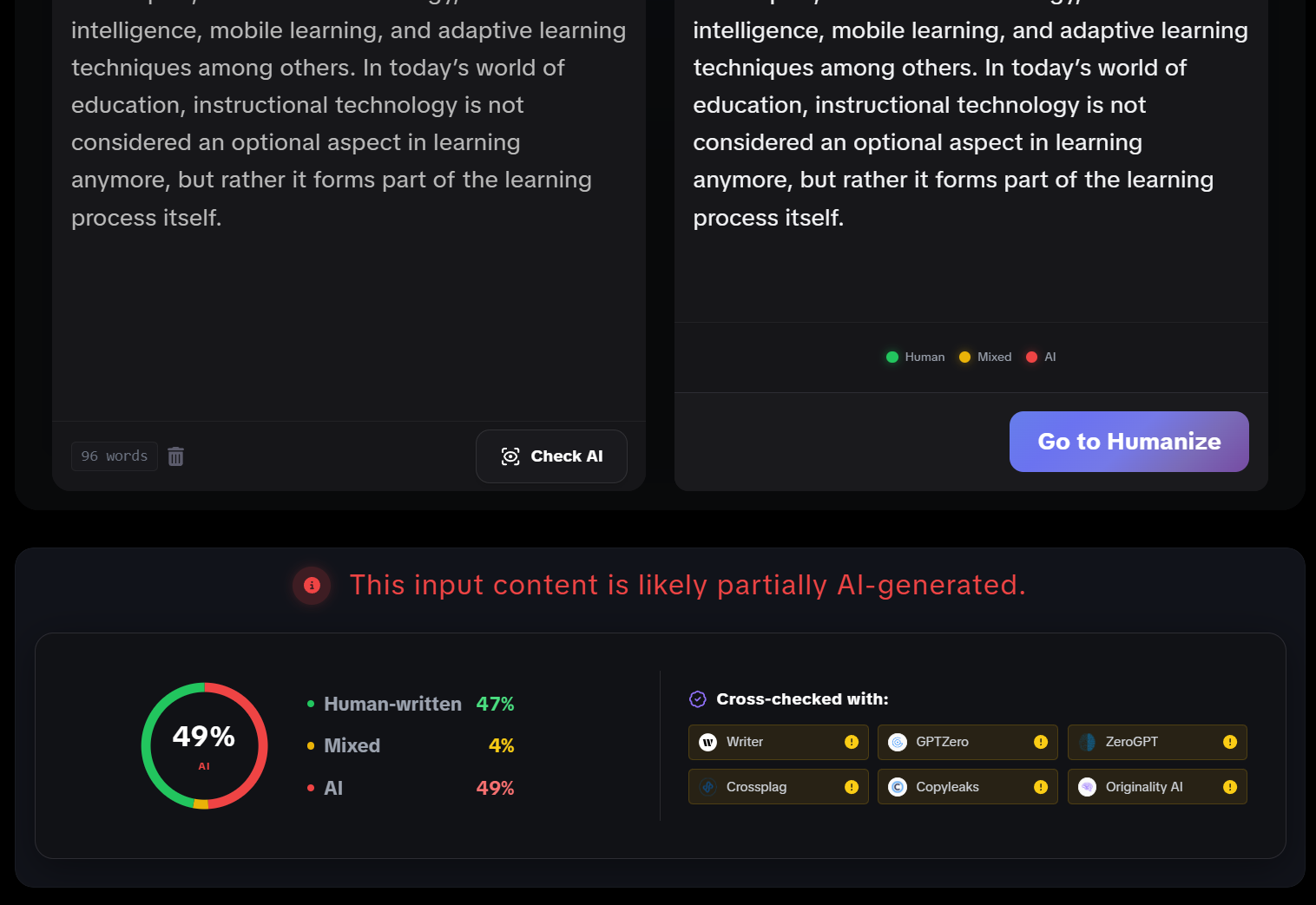

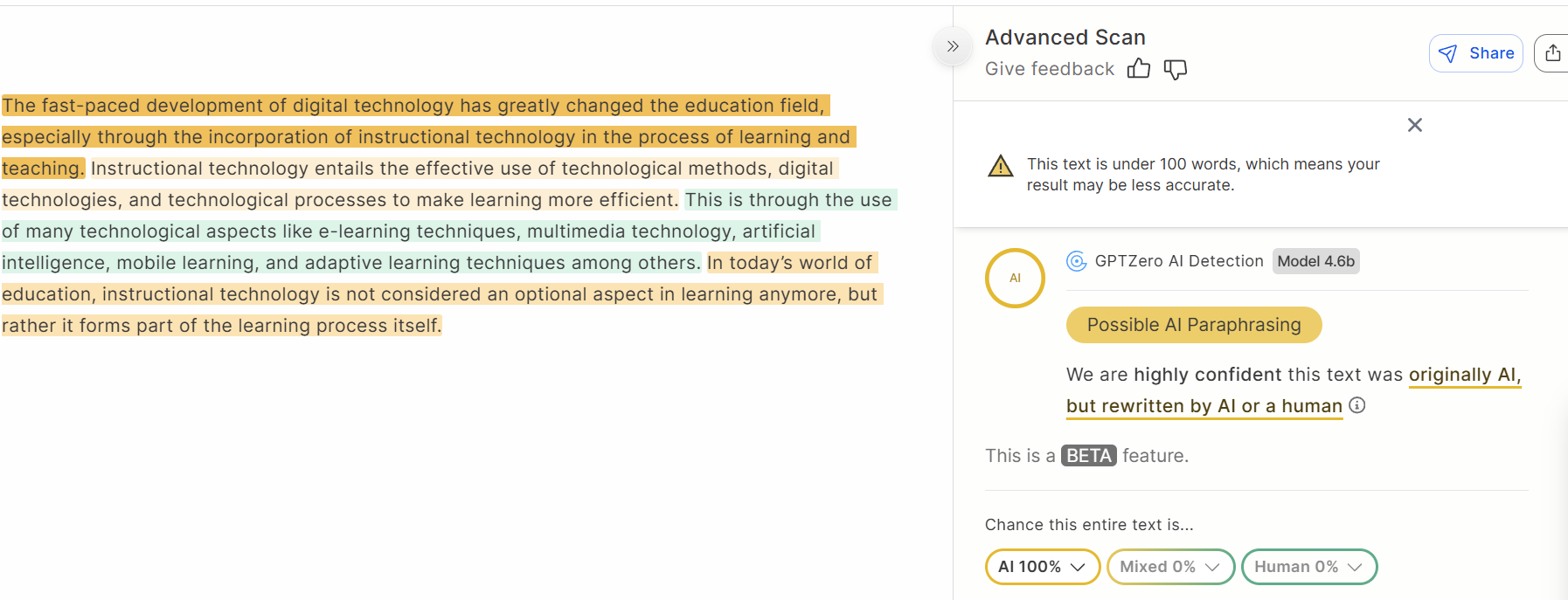

Test 3 | GPTinf-humanized instructional technology paragraph | 3% AI | GPTHumanizer AI: 49% AI; GPTZero: 100% possible AI paraphrasing | Mixed results across detectors |

The most useful part of this test was not just whether GPTinf gave “good” or “bad” scores. It was seeing where the results matched the text, and where cross-detector disagreement appeared.

Test 1: GPTinf Correctly Flagged Obvious AI Text

GPTinf Detector labeled the obvious AI-style remote work sample as 100% AI, which matched the text’s generic structure, smooth transitions, and predictable phrasing.

The first sample was intentionally written to sound like typical AI output. It used broad phrases, clean paragraph structure, safe conclusions, and generic business benefits. There was no personal observation, no specific example, and no real point of view.

GPTinf marked this sample as 100% AI.

That result felt reasonable. The text had many traits people often associate with AI-generated writing: balanced phrasing, predictable transitions, broad claims, and a polished but empty conclusion.

This does not prove GPTinf will catch every AI-written text. But for an obvious AI-style sample, the detector gave a result that matched the writing.

Test 2: GPTinf Did Not Over-Penalize Rough Human Writing

GPTinf Detector gave the rough human-written sample a 16% AI score, which is useful because imperfect human writing should not automatically be treated as AI-generated.

This test matters because many users are not checking polished professional writing. They may be checking ESL drafts, rough essays, personal writing, early blog drafts, or text with grammar problems.

The human-written smoking/public place sample was messy and uneven. It had awkward phrasing, grammar issues, repeated ideas, and unstable sentence flow. But it also had clear human signals: personal observation, emotional wording, inconsistent structure, and imperfect phrasing that did not feel machine-polished.

GPTinf rated this sample as 16% AI.

I would call that a reasonable result. The detector did not simply treat bad grammar or non-standard English as AI. That matters because one of the biggest risks with AI checkers is false suspicion toward rough human writing, especially from non-native writers.

This does not mean GPTinf never misjudges human text. It only means that in this sample, the result was sensible.

Test 3: GPTinf-Humanized Output Got Very Different Detector Results

GPTinf’s own detector rated the GPTinf-humanized output as only 3% AI, but external checks were less confident. GPTHumanizer AI Detector rated it 49% AI, while GPTZero flagged it as 100% possible AI paraphrasing.

This was the most important test because it matches what many users actually want to know: if GPTinf rewrites a text, will the result still look AI-generated to detectors?

The original instructional technology paragraph had a formal AI-like structure. It used broad academic phrasing and clean but generic explanations. After GPTinf humanized it, the wording became simpler and less formal.

GPTinf Detector then rated the output as 3% AI.

If I only looked at GPTinf’s own detector, the result would look very strong. But the external checks changed the picture:

| Detector | Result on GPTinf-humanized output | What it suggests |
| --- | --- | --- |
| GPTinf Detector | 3% AI | GPTinf’s own detector rated it as mostly human-like |
| GPTHumanizer AI Detector | 49% AI, “likely partially AI-generated” | The text still showed some AI-like signals |
| GPTZero | 100%, “Possible AI Paraphrasing” | GPTZero strongly suspected AI-paraphrased text |

This does not make GPTinf Detector useless. It shows that same-tool testing has limits. When a humanizer output is checked by the same product’s detector, the result may look better than it does in external tools.

The fair conclusion is simple: GPTinf’s own workflow performed well on this sample, but that does not prove universal detector reliability. Users should not assume that a low GPTinf score means the same text will receive a low score everywhere.
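To make the disagreement concrete, the three Test 3 scores can be compared with a few lines of Python. This is only a rough sketch. The `scores` dictionary, the `spread` and `detectors_disagree` functions, and the 30-point `DISAGREEMENT_THRESHOLD` are illustrative assumptions, not anything the three tools publish. It also treats the percentages as if they sat on one comparable scale, which they may not: GPTZero's figure came with a "possible AI paraphrasing" label rather than a plain AI score.

```python
# Scores reported in Test 3 for the same GPTinf-humanized paragraph.
# Assumption: the percentages are treated as comparable 0-100 values,
# although each detector defines its score differently.
scores = {
    "GPTinf Detector": 3,
    "GPTHumanizer AI Detector": 49,
    "GPTZero": 100,
}

# Hypothetical cut-off for calling the results "in disagreement".
DISAGREEMENT_THRESHOLD = 30


def spread(results: dict[str, int]) -> int:
    """Return the gap between the highest and lowest score."""
    values = list(results.values())
    return max(values) - min(values)


def detectors_disagree(
    results: dict[str, int], threshold: int = DISAGREEMENT_THRESHOLD
) -> bool:
    """True when the gap between detectors exceeds the chosen threshold."""
    return spread(results) > threshold


if __name__ == "__main__":
    print(f"Spread: {spread(scores)} points")
    print(f"Detectors disagree: {detectors_disagree(scores)}")
```

A gap this wide between tools is a reasonable cue to read the text manually instead of trusting any single number.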

What the Test Results Actually Mean

GPTinf AI Detector is useful when you need a fast first check, but the test results do not support using it as the only source of truth. It performed well on clear cases, yet detector disagreement appeared once humanized text was tested.

Here is the shorter takeaway:

| What I noticed | Why it matters |
| --- | --- |
| GPTinf Detector is free, no-login, and handled 2,000+ words | It is convenient for quick checks and longer drafts |
| It rated obvious AI text as 100% AI | It seems sensitive to highly generic AI-style writing |
| It rated rough human writing as only 16% AI | It did not over-penalize imperfect grammar in this sample |
| It rated GPTinf-humanized output as 3% AI | GPTinf’s own workflow looked strong internally |
| External detectors disagreed with that 3% score | Same-tool detector results should be treated carefully |
| A low AI score does not equal strong writing | Human review is still needed for meaning, tone, and clarity |

This is enough to form a practical judgment. GPTinf Detector is not a bad checker. In fact, the access experience is good, and the clear-sample results were reasonable. The problem starts when users treat the result as final proof, especially after running text through GPTinf’s own humanizer.

Should You Trust GPTinf AI Detector Results?

Treat a GPTinf AI Detector result as a useful warning, not decisive evidence. The score is a helpful signal, but it is not enough by itself, so check it against the text and, if needed, against another detector.

My practical rule is simple. If GPTinf gives a high AI result, review the text. Look for generic wording, smooth transitions, repeated sentence forms, and hollow conclusions.

If GPTinf gives a low result, don’t stop there. Read the text yourself. Check whether it sounds natural, whether it preserves the meaning, and whether it is appropriate for your audience.

That is especially true after humanization. In this test, GPTinf’s own detector gave GPTinf-humanized text a very low AI score, but other detectors were more skeptical. A lower detector score is a good sign, but it is not the same as better writing.
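The rule of thumb above can be written down as a small checklist helper. This is a minimal sketch, not a tool GPTinf provides. The function name `next_steps`, the `humanized` flag, and the 50-point `high_cutoff` are all assumptions chosen for illustration, since the review does not define an exact boundary between a "high" and a "low" score.

```python
def next_steps(
    ai_score: float, humanized: bool = False, high_cutoff: float = 50.0
) -> list[str]:
    """Suggest manual follow-up steps for a detector score (0-100).

    high_cutoff is a hypothetical boundary between "high" and "low";
    adjust it to whatever threshold makes sense for your own use.
    """
    steps = []
    if ai_score >= high_cutoff:
        # High score: look for the patterns detectors tend to react to.
        steps.append(
            "Review the text for generic wording, smooth transitions, "
            "repeated sentence forms, and hollow conclusions."
        )
    else:
        # Low score: the number alone does not confirm quality.
        steps.append(
            "Read the text yourself: check natural tone, preserved "
            "meaning, and audience fit."
        )
    if humanized:
        # Same-tool results looked better than external ones in this test.
        steps.append("Cross-check the text with a second detector.")
    return steps


if __name__ == "__main__":
    for step in next_steps(3, humanized=True):
        print("-", step)
```

Either branch ends in a manual read. The score only changes what to look for first.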

Final Verdict: GPTinf AI Detector Is Helpful, But Cross-Check Important Results

GPTinf AI Detector performed reasonably on the clear samples. It was free, required no login in my test, handled a 2,000+ word sample, correctly flagged an obvious AI-style paragraph as 100% AI, and gave a rough human-written draft a low 16% AI score.

The GPTinf-humanized test was more complicated. GPTinf’s own detector rated the output as 3% AI, but GPTHumanizer AI Detector rated it 49% AI, and GPTZero flagged it as 100% possible AI paraphrasing.

That is the real takeaway. GPTinf AI Detector is convenient and useful for quick checks, but users should not rely on it alone when the result matters. It works best as a first signal, followed by manual review and, when necessary, a second detector.

FAQ

Q: Is GPTinf AI Detector free to use?

A: Yes, GPTinf AI Detector was free to use in my test. It did not require login, and it successfully processed a text sample of more than 2,000 words.

Q: Is GPTinf AI Detector reliable?

A: GPTinf AI Detector is reasonably useful for quick checks, but it should not be treated as final proof. It performed well on clear samples, but external detectors gave very different results on GPTinf-humanized output.

Q: Does GPTinf AI Detector detect obvious AI writing?

A: Yes, GPTinf AI Detector detected the obvious AI-style sample in this test. A generic remote work paragraph was rated 100% AI, which matched its template-like structure.

Q: Can GPTinf AI Detector misjudge human writing?

A: GPTinf AI Detector can still misjudge human writing, like any AI checker. In this test, a rough human-written draft received only 16% AI, which was a reasonable result.

Q: What happened after using GPTinf Humanizer?

A: GPTinf Detector rated the GPTinf-humanized output as 3% AI, but GPTHumanizer AI Detector rated it 49% AI and GPTZero flagged it as 100% possible AI paraphrasing.